Non-Invasive Evolutionary AI-Powered Communication for ALS Patients

Background: A Critical Healthcare Challenge

Amyotrophic Lateral Sclerosis affects approximately 33,000 Americans at any given time, with roughly 6,000 new diagnoses each year. As the disease progresses, patients lose the ability to speak and move. Cognitive function and eye control, however, typically remain intact. The result is a devastating form of isolation: people who can think, feel, and understand become locked inside bodies that can no longer communicate.

Current brain-computer interface solutions involve difficult tradeoffs. Invasive systems require neurosurgical implantation, which carries real risk. Non-invasive approaches have historically been limited to simple binary (yes or no) classification, which falls far short of expressing the full range of human needs. Basic care requests, emotional states, and medical concerns are not adequately captured. On top of that, generic synthetic voices strip away the personality and warmth that shape how we connect with the people we love.

The need for a comprehensive, non-invasive communication system that preserves both capability and identity is urgent and growing.

Our Solution: Multimodal AI for Complete Communication

We have developed a system that integrates three complementary technologies: brain-computer interface, eye-tracking, and neural voice synthesis. Together, they maintain communication throughout every stage of ALS progression.

The underlying quantum-inspired deep learning architecture processes EEG brain signals and eye-tracking data simultaneously. On the EEGET-ALS benchmark dataset, it achieves 95.42% classification accuracy across nine distinct communication categories. For context, existing non-invasive systems typically handle 2 to 4 classes. This is not an incremental improvement. It represents a fundamental expansion of what non-invasive BCIs can accomplish.

No surgical implantation is required. No cloud connectivity. No institutional infrastructure. The system runs entirely on portable hardware, with solar power as an option, and adapts automatically as the disease progresses. In early stages, motor-imagery input dominates. In advanced stages, the system shifts to eye-gaze input. Communication remains continuous from diagnosis through locked-in state.
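One way to picture the shift from motor-imagery input to eye-gaze input is a weighted fusion of two per-category probability vectors, where the weights follow how usable each signal currently is. The sketch below is only an illustration under stated assumptions, not the system's actual architecture: the function names, the signal-quality scores, and the simple weighted-average rule are all hypothetical. Only the count of nine categories comes from the text above.

```python
N_CATEGORIES = 9  # from the text: nine communication categories


def modality_weights(eeg_quality, gaze_quality):
    """Convert two signal-quality scores in [0, 1] into weights that sum to 1.

    The quality scores are a hypothetical input; the source does not say
    how the real system decides which modality to trust.
    """
    total = eeg_quality + gaze_quality
    if total <= 0:
        return 0.5, 0.5  # no usable signal on either side: weight equally
    return eeg_quality / total, gaze_quality / total


def fuse(eeg_probs, gaze_probs, eeg_quality, gaze_quality):
    """Weighted average of two per-category probability vectors."""
    if len(eeg_probs) != N_CATEGORIES or len(gaze_probs) != N_CATEGORIES:
        raise ValueError("expected one probability per category")
    w_eeg, w_gaze = modality_weights(eeg_quality, gaze_quality)
    fused = [w_eeg * e + w_gaze * g for e, g in zip(eeg_probs, gaze_probs)]
    norm = sum(fused)
    if norm == 0:
        return [1.0 / N_CATEGORIES] * N_CATEGORIES  # fall back to uniform
    return [p / norm for p in fused]


def predict(eeg_probs, gaze_probs, eeg_quality, gaze_quality):
    """Return the index of the most probable category after fusion."""
    fused = fuse(eeg_probs, gaze_probs, eeg_quality, gaze_quality)
    return max(range(N_CATEGORIES), key=fused.__getitem__)


def peaked(index, peak=0.6):
    """Toy distribution: most of the mass on one category, the rest spread evenly."""
    rest = (1.0 - peak) / (N_CATEGORIES - 1)
    return [peak if i == index else rest for i in range(N_CATEGORIES)]


# Toy scenario: the EEG branch favours category 2, the gaze branch category 5.
eeg_probs, gaze_probs = peaked(2), peaked(5)
early_stage = predict(eeg_probs, gaze_probs, eeg_quality=0.9, gaze_quality=0.1)
late_stage = predict(eeg_probs, gaze_probs, eeg_quality=0.1, gaze_quality=0.9)
print(early_stage, late_stage)
```

With the toy inputs, the prediction follows the EEG branch when its quality score is high and the gaze branch when the balance reverses. That is the behaviour the paragraph describes, reduced to its simplest form.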

Why This Matters: Real-World Impact

For Healthcare Providers:

Non-invasive assessment uses standard 19-channel EEG and camera-based eye-tracking. Nine communication categories cover basic needs, medical concerns, emotional states, and system navigation. Real-time classification runs with under 50 ms of inference latency, and objective measurements reduce the interpretive burden on clinical staff.
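A latency figure like the one above can be checked with a small timing harness. The following is a minimal sketch, assuming a classifier exposed as a plain Python callable. The placeholder classifier, the window length of 128 samples, and the choice of median and 95th-percentile statistics are illustrative assumptions. Only the 19 channels, the nine categories, and the 50 ms budget come from the text.

```python
import math
import statistics
import time

N_CHANNELS = 19           # from the text: standard 19-channel EEG
N_CATEGORIES = 9          # from the text: nine communication categories
LATENCY_BUDGET_MS = 50.0  # from the text: under 50 ms per inference
WINDOW_SAMPLES = 128      # assumption: window length is not given in the source


def placeholder_classifier(window):
    """Hypothetical stand-in for the real model: picks a category from channel energy."""
    energy = sum(sample * sample for channel in window for sample in channel)
    return int(energy) % N_CATEGORIES


def measure_latency_ms(classify, window, runs=100, clock=time.perf_counter):
    """Time repeated calls to `classify` and return (median, 95th percentile) in ms."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    timings = []
    for _ in range(runs):
        start = clock()
        classify(window)
        timings.append((clock() - start) * 1000.0)
    timings.sort()
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(timings)) - 1)
    return statistics.median(timings), timings[p95_index]


# Synthetic input: 19 channels of a fixed ramp signal, not real EEG data.
window = [[(c + s) % 7 * 0.1 for s in range(WINDOW_SAMPLES)] for c in range(N_CHANNELS)]
median_ms, p95_ms = measure_latency_ms(placeholder_classifier, window)
print(f"median {median_ms:.3f} ms, p95 {p95_ms:.3f} ms, "
      f"within budget: {p95_ms < LATENCY_BUDGET_MS}")
```

Reporting a high percentile alongside the median matters for a real-time aid, because occasional slow inferences are what a user would actually notice. The `clock` parameter exists only so the harness can be exercised with a deterministic clock.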

For Patients and Families:

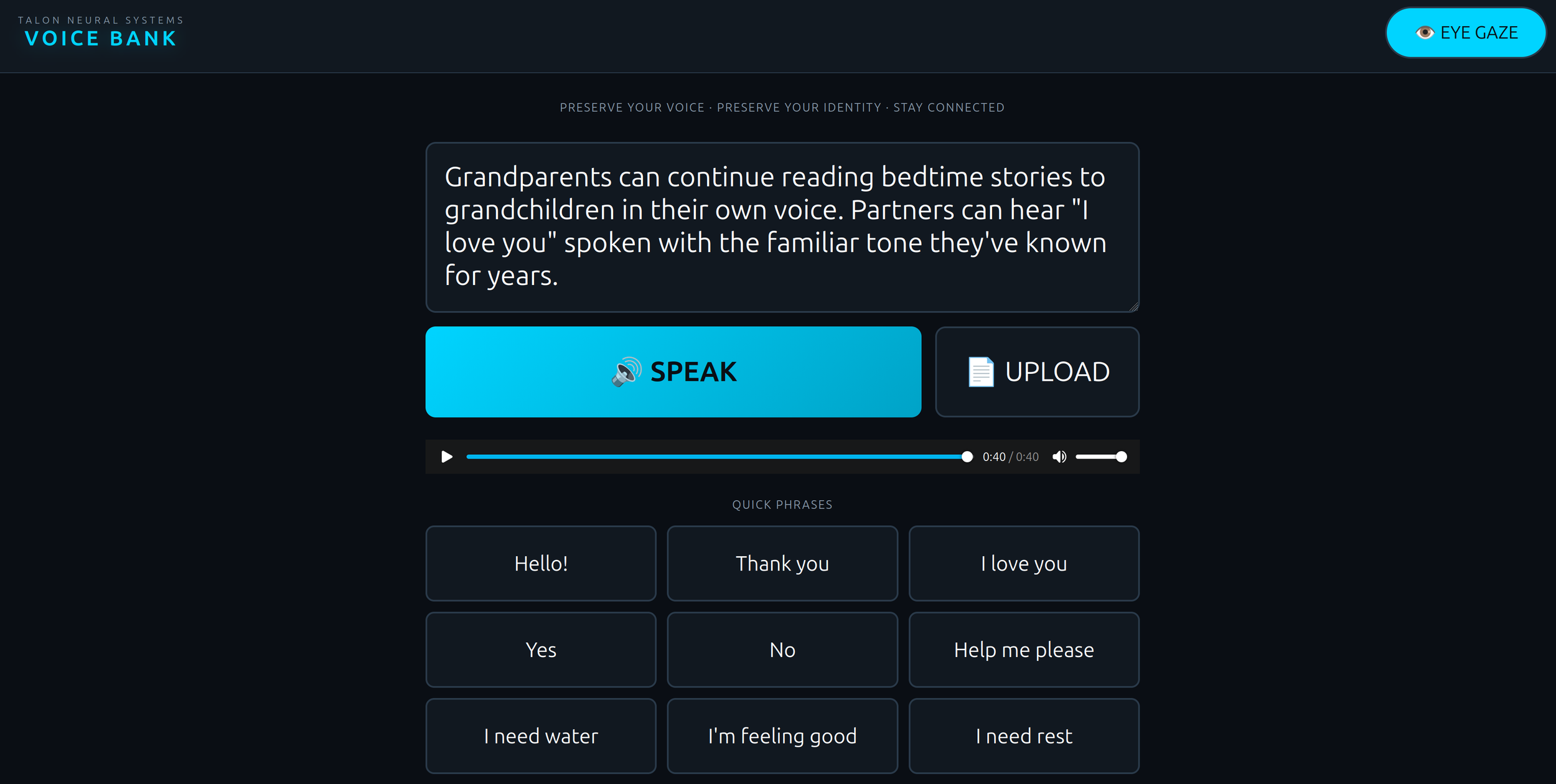

Voice preservation captures and reproduces the patient's own voice from as little as 30 seconds of recording. Communication adapts as motor function changes, so patients are never left without a voice. Smart home integration allows independent control of lighting, temperature, entertainment, and safety systems. Parametric voice control means words still carry feeling: not monotone synthetic speech, but something closer to the real person.